IDK how common this is, but there are stories of companies that make you work on real production code in the interview. Basically suckering you into free work before they give the position to the boss’s cousin or something.

Combating artificial intelligence with natural stupidity.

- 29 Posts

- 198 Comments

13·17 days ago

Is this satire or real? Genuinely can’t tell because every real AI Bro startup reads like satire.

82·25 days ago

Humans can barely write safe C code, so I definitely don’t trust AI to. I’m not even blanket against AI assistance in programming, but there are way too many hidden landmines in C for an LLM to be reliable with.

Just write the Rust build configuration as a Rust struct at this point.

20·28 days ago

Just put “Precondition: x must not be prime” in the function doc and it’ll be 100% accurate. Not my fault if you use it wrong.

12·29 days ago

Yeah, reading it back I realize I was being an ass. I apologize.


12·30 days ago

How the fuck does a tech company as big as Amazon have this running in production and not a test/staging environment? This is the kind of mistake a platform run by one person makes and even then they probably won’t make it again.

Huh, something tells me that working in FAANG doesn’t actually make you a better engineer, they just have more money to not throw at R&D which somehow makes you feel superior to every other engineer 🤔

152·30 days ago

Buses. They’re called buses.

32·1 month ago

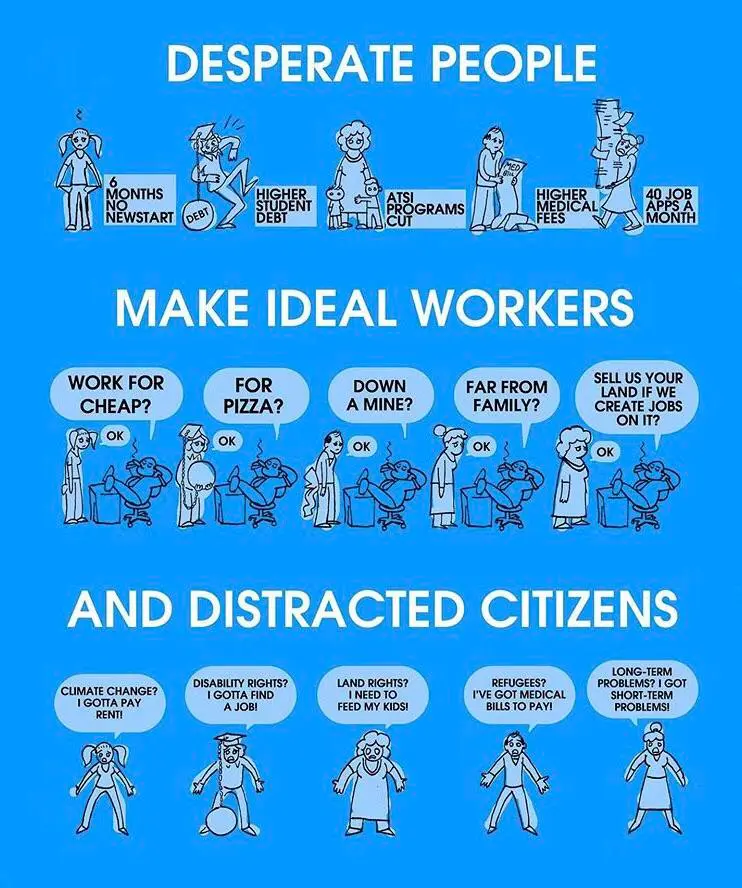

32·1 month agoFor-profit cororate tech: Goes down all the time and horifically inefficient despite having trillions to throw at R&D

Some random critical infrastructure open source project with three maintainers basically working for free: The most goddamn robust and optimized code to ever touch your silicon.

3·1 month ago

Unlike C++ though, explosives usually degrade over time and become less dangerous.

Wym? You mean you don’t like typing out `unsigned long long` a hundred times?

`main =` This message was brought to you by the Haskell gang

`let () =` This message was brought to you by the OCaml gang

This message was brought to you by the Python gang (only betas check `__name__`, assert your dominance and force every import to run your main routine /s)

12·1 month ago

They’re talking about your commute on the highway to some office park in the middle of nowhere.

42·1 month ago

Unrelated, but anyone else think it’s really weird that we just casually accept our food utensils containing chromium? Like, I know it’s an alloy and not just free chromium, but would we accept a lead alloy spoon? Probably not, especially with most food being acidic. Honestly I’m just waiting for the paper that says we’ve been slowly poisoning ourselves with stainless steel every time we eat.

11·1 month ago

So that’s what all the DRAM they scalped is storing.

*Junior devs

Senior devs are more likely to write one-liners from their Vim window.

What about the IP issues? Not even talking about the “ethics” of “IP theft via AI” or anything, you just know a company like Microsoft or Apple will eventually try suing an open source project over AI code that’s “too similar” to their proprietary code. Doesn’t matter if they’re doing the same to a much greater degree, all that matters is they have the resources to sue open source projects and not the other way around. If a tech company can get rid of the competition by abusing the legal system, you just know they will, especially if they can also play the “they’re knowingly letting their users use pirated media that we own with their software” card on top of it.

Is this not against GDPR? I feel like this would be a slam dunk case.